
Just Rebuild It – Tales from the Trek – We Don’t Need Requirements

Technology Upgrades – Self-Documenting (?)

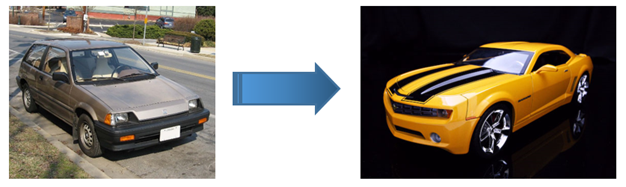

I’ve been on several projects where the goal was to replace an existing, mature system with a brand-new system built on newer technology. This is generally a huge investment of time and money, fraught with risk, and it results in almost the same functionality. Still, it can be necessary when the old software depends on unsupported or insecure technology and its maintenance costs are climbing steeply. In a way, it’s like deciding to replace an old car rather than repair it.

On nearly every project of this type that I have worked on, the project sponsors insisted that we could cut out, or at least drastically reduce, business analysis. Their reasoning was that because the new system simply had to work exactly like the old one, it was already “fully documented” in the form of the old system.

There are a number of reasons why assuming that the old system “fully documents” the new system is a mistake. I’ll address one of them here.

Rebuilding Bugs and Poor Functionality

Software running on outdated, unsupported technology often has many bugs and poorly designed features that were never addressed; the system has become so fragile that any change is risky. If the only direction given to the development team is to rebuild the existing system as is, with no additional analysis, the team will faithfully rebuild the same bugs and poor functionality in the new system. Without at least some investment in business analysis, the development team has no good way to know what should be changed.

The end result is that users and project sponsors become even more frustrated: they invested in new software only to end up with the same problems. Even worse, building the bugs into the new system can sometimes cost more money. Imagine how much more it would cost to rebuild an old car exactly as it is rather than build a new car with the same basic functionality. It would take real effort to make the brakes squeak just like the old car’s, to make it shake at high speeds just like the old car, and to have it randomly stall in the middle of intersections just like the old car.

The money spent rebuilding old bugs would be much better spent on some business analysis.

Feedback

What challenges have you had with generating requirements when replacing existing systems? What actions have you tried to address the challenges? I’d love to hear your stories from the trek.

User Acceptance Testing – Tales from the Trek – Writing a Perfect Bug

My early experiences running User Acceptance Testing (UAT) typically involved just one or two users. When they encountered an error, they’d show it to me, and I’d record it. As I gained experience in Quality Assurance, however, I began working on larger projects.

Training – How to Write a Bug

On my first larger project, I needed to train a group of 20 users who were going to perform User Acceptance Testing. Having worked with many different users in the past, I knew several of the pitfalls they might encounter. However, I had never really asked users to record errors themselves. Still, I thought it would be easy to get the point across.

During the training session, I said that it was critical to record every step needed for a developer to recreate the bug. I explained that we often receive reports that simply say, “It doesn’t work,” and that developers will not be able to fix a problem described so generically. The users said that they understood perfectly and were ready to start testing.

Bug Writing – Frustration

With 20 inexperienced testers working independently, it was important to filter the reported bugs before assigning them to the developers. I was responsible for triaging every bug the users reported: I verified that I could recreate each one and eliminated duplicates before assigning them to the developers. If I could not recreate a bug from its instructions, I reassigned it to the user who reported it and asked for more detail. I assumed that the users would quickly realize when they were not providing enough detail and would start writing perfect bugs after a day or two.

I assumed wrong.

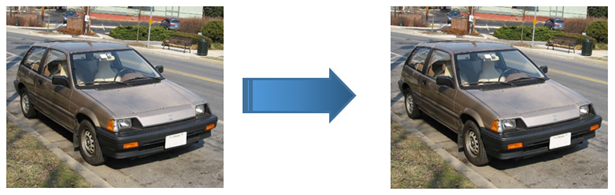

Although a few users were writing clear, reproducible bugs, most were writing bugs that were way too generic. For example:

- I was on the “Choose a Course” screen.

- When I chose a course, I got an error.

In this case, when I tried to reproduce the bug, I did not receive an error, so I sent it back to the user requesting more detail. I had to reject most bugs the first time they were recorded, and I often had to reject the rewrite as well. Pretty quickly, the users got fed up with me and complained to their manager that I was rejecting their bugs.

Enlightenment

I realized that I needed a way to show the users exactly what a clear, reproducible bug report requires. I developed the following simple exercise, which proved very effective, and I have used it with the same success on every large-scale UAT effort since.

I ask the users to do the following with their first 5 bug reports:

1. Record the bug as clearly as you can and assign it to yourself.

2. Wait 5 hours.

3. Try to recreate the bug following only the information that you recorded.

4. If you are able to recreate the bug, assign it to me. Otherwise, go back to step 1.

It is important that the users wait a while before trying to recreate the bug. I found that when they try immediately, the steps are too fresh in their minds for them to follow only what they wrote; they fill in the gaps without realizing it.

I have found that once users complete this exercise, they consistently write bugs with enough detail to be reproducible. They do not become expert testers, of course, but they can write a useful bug.

I’m interested in hearing about other people’s experiences as well.

What concepts have you found especially difficult to get across to people performing UAT for the first time?

Were you able to find a technique that helped the users better understand the concept?